Most organizations have AI principles. A PDF, approved at executive level, published on the intranet, referenced in the annual report. What most organizations do not have is AI governance that executes at runtime: controls that actually prevent the disallowed action, routes that actually direct the work to the right model, and monitors that actually detect drift from policy. The gap between principles and execution is where incidents happen.

Key Takeaways

- 63% of organizations lacked AI governance policies at the time of their breach; 97% of AI breaches lacked proper access controls

- Only 37% of organizations have AI governance policies; fewer still have operational controls implementing them

- 47% of CISOs have observed AI agents exhibiting unintended behavior; 80% of organizations report agents taking unintended actions

- Gartner predicts that by 2030, more than 40% of enterprises will experience security or compliance incidents linked to unauthorized shadow AI

Why Principles Fail at Execution Time

A policy PDF is a statement of intent. It can say "employees must not submit confidential data to unapproved AI tools," and every word of that sentence is correct. What it cannot do is prevent the submission. At the moment an employee is about to paste a contract into ChatGPT, the PDF is not in the loop. The only control that matters is one that operates at that moment: a DLP rule, a browser policy, a network block, an awareness prompt.

This is the gap. Principles are upstream. Incidents happen downstream. An AI governance program that is heavy on principles and thin on controls accumulates risk quarter over quarter while reporting progress. For organizations that have already identified the scope of their exposure through a shadow AI discovery sprint, the next question is whether the response is a document or a control.

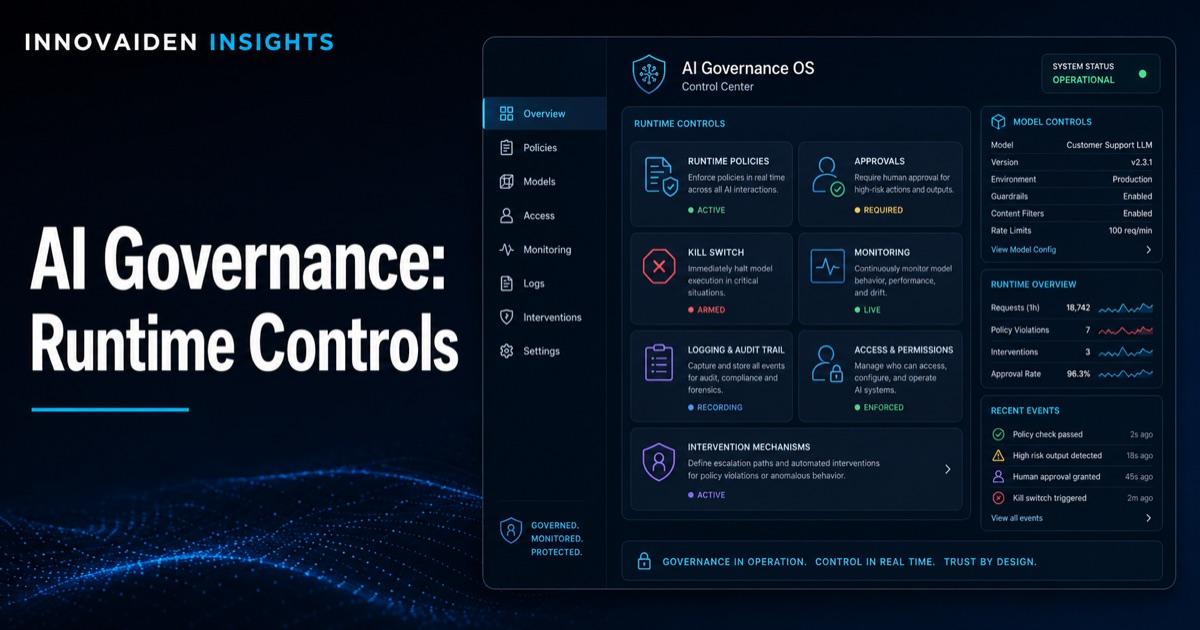

What Runtime AI Governance Looks Like

Four operational capabilities, in combination, convert principle into control:

| Capability | What It Does | Implementation |
|---|---|---|
| Guardrails | Evaluate whether an AI interaction is permitted at the point of use | Input guardrails check what is being sent (PII, secrets, regulated data). Output guardrails check what is being returned (hallucinations, disallowed content, sensitive data echo). |
| Routing | Direct AI requests to appropriate models based on data sensitivity | Sensitive data routes to on-premise or private-tenant deployments. Public data routes to cost-effective external APIs. Agentic actions route through approval layers for regulated systems. |
| Kill switches | Disable AI capabilities within seconds when needed | A specific control: an API endpoint, a feature flag, or an IAM policy change. Without it, an agent taking incorrect action continues until someone figures out how to stop it. |
| Monitoring | Continuously inspect AI behavior against expected patterns | Prompts and responses sampled and reviewed. Agent actions logged and compared to authorized scope. Deviations flagged. Requires AI-specific telemetry that traditional security tools do not capture. |
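
To make the first three rows concrete, here is a minimal Python sketch of an input guardrail, a sensitivity-based router, and a kill switch working together. It is an illustration under stated assumptions, not a reference implementation: every name in it (`SENSITIVE_PATTERNS`, `ControlState`, `input_guardrail`, `route`, and the route labels) is hypothetical, and the two regular expressions merely stand in for a maintained DLP or PII detector.

```python
import re
from dataclasses import dataclass, field

# Hypothetical detection patterns; a real guardrail would call a maintained
# DLP/PII detection service rather than two hand-written regexes.
SENSITIVE_PATTERNS = {
    "email": re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+"),
    "api_key": re.compile(r"\bsk-[A-Za-z0-9]{16,}\b"),
}


@dataclass
class ControlState:
    """Kill-switch state: names of AI capabilities that are currently disabled."""
    disabled: set = field(default_factory=set)

    def kill(self, capability: str) -> None:
        self.disabled.add(capability)

    def restore(self, capability: str) -> None:
        self.disabled.discard(capability)


def input_guardrail(prompt: str) -> list:
    """Return the sorted names of sensitive patterns found in the prompt."""
    return sorted(name for name, pat in SENSITIVE_PATTERNS.items() if pat.search(prompt))


def route(prompt: str, capability: str, state: ControlState) -> str:
    """Decide where a request goes; the returned labels are illustrative only."""
    if capability in state.disabled:
        return "blocked:kill_switch"        # kill switch wins over everything else
    findings = input_guardrail(prompt)
    if "api_key" in findings:
        return "blocked:secret_detected"    # secrets never leave the gateway
    if findings:
        return "private_tenant_model"       # sensitive data stays in a private deployment
    return "external_api_model"             # public data can use an external API
```

In this sketch the kill switch is checked before anything else, so calling `state.kill("chat")` stops every `chat` request on the next call to `route`, regardless of what the prompt contains.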

A Lightweight Control Plane for the Mid-Market

Large enterprises will build full AI governance platforms. Mid-market organizations and PE-backed firms usually do not have the budget, team, or use-case density to justify that build. A lightweight control plane achieves 70 to 80% of the outcome at a fraction of the investment.

The components: an API gateway that all AI traffic routes through, a policy engine that evaluates each request against rules, a logging layer that captures every request and response, and an alerting layer that flags policy violations. A functional version can be in production in 6 to 12 weeks with a small team. For organizations operating under NIS2 and the Swedish Cybersecurity Act, this control plane provides the audit-ready evidence that supervisory bodies increasingly expect.
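
The four components can be sketched in a few dozen lines of Python. This is a simplified illustration, assuming an in-memory audit log and alert list in place of real storage and paging; `Request`, `Gateway`, `SANCTIONED_TOOLS`, and both example rules are hypothetical names invented for the sketch.

```python
import json
import time
from dataclasses import dataclass
from typing import Callable, List, Optional


@dataclass
class Request:
    user: str
    tool: str
    prompt: str


# A policy rule returns a violation message, or None when the request passes.
Rule = Callable[[Request], Optional[str]]

SANCTIONED_TOOLS = {"internal-assistant"}  # hypothetical sanctioned tool list


def only_sanctioned_tools(req: Request) -> Optional[str]:
    return None if req.tool in SANCTIONED_TOOLS else f"unsanctioned tool: {req.tool}"


def no_confidential_marker(req: Request) -> Optional[str]:
    return "confidential marker in prompt" if "CONFIDENTIAL" in req.prompt.upper() else None


class Gateway:
    """Single entry point for AI traffic: evaluate policy, forward, log, alert."""

    def __init__(self, rules: List[Rule], forward: Callable[[Request], str]):
        self.rules = rules          # policy engine: an ordered list of rules
        self.forward = forward      # call to the model; injected so it can be stubbed
        self.audit_log: List[str] = []   # logging layer (JSON lines, in memory here)
        self.alerts: List[dict] = []     # alerting layer (would notify on-call in practice)

    def handle(self, req: Request) -> Optional[str]:
        violations = []
        for rule in self.rules:
            message = rule(req)
            if message is not None:
                violations.append(message)
        # Only forward the request when every rule passed.
        response = None if violations else self.forward(req)
        entry = {
            "ts": time.time(),
            "user": req.user,
            "tool": req.tool,
            "prompt": req.prompt,
            "response": response,
            "violations": violations,
        }
        self.audit_log.append(json.dumps(entry))  # every request and response is captured
        if violations:
            self.alerts.append(entry)             # policy violations are flagged
        return response
```

Passing the model call in as `forward` keeps the policy path testable without a live model, and because every request is logged whether or not it is blocked, the audit log doubles as the evidence trail.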

Phasing: From Experimental to Governed in 6 to 12 Months

| Phase | Months | Deliverable |
|---|---|---|
| Inventory | 0 to 2 | Every AI tool, agent, and API key cataloged. Complete by month 2. |
| Policy | 2 to 4 | Tiered data and use-case policy finalized. Approved patterns documented. Sanctioned tool list published. Enforcement mechanism identified for each policy. |
| Controls | 4 to 8 | Gateway deployed. Guardrails implemented for highest-priority tiers. Routing rules defined. Kill switches in place for agents with production access. |
| Operations | 8 to 12 | Monitoring live. Quarterly review cadence established. Metrics reported to executive committee and board. Incident response runbooks updated. |

This is not a moonshot. It is a program with a defined end state. The alternative, the path most organizations are currently on, is to accumulate AI deployments and governance debt until the first material incident forces the work at a worse time. Runtime governance is also the connective layer across the six operational domains of the AI agent deployment security framework, which maps these controls to each domain.

A telemetry standard is now available to plug all of the above into. The OpenTelemetry GenAI semantic conventions reached stable status in early 2026, defining cross-vendor span attributes for LLM calls, agent steps, and tool invocations, including model name, token counts, prompt/response identifiers, and latency. This is the plumbing that the runtime controls described here depend on; before stable conventions, every observability vendor reinvented the schema. Organizations standing up the lightweight control plane should align their telemetry to OTel GenAI from the start, so the policy engine and audit log can consume a stable, vendor-neutral data shape.
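
As a rough sketch of what consuming that data shape could look like, the Python below builds span-attribute dictionaries in the `gen_ai.*` namespace and flags tool invocations that fall outside an agent's authorized scope. It deliberately uses plain dictionaries rather than the OpenTelemetry SDK. The attribute keys follow the GenAI semantic conventions as best understood at the time of writing and should be checked against the published specification; `AUTHORIZED_TOOLS`, the agent and tool names, and all three functions are hypothetical.

```python
from typing import Dict, Iterable, List

# Hypothetical authorized scope: which tools each agent is allowed to invoke.
AUTHORIZED_TOOLS: Dict[str, set] = {
    "invoice-agent": {"read_invoice", "draft_email"},
}


def llm_span_attributes(model: str, input_tokens: int, output_tokens: int) -> dict:
    """Attributes for one LLM call (keys assumed from the gen_ai.* conventions)."""
    return {
        "gen_ai.operation.name": "chat",
        "gen_ai.request.model": model,
        "gen_ai.usage.input_tokens": input_tokens,
        "gen_ai.usage.output_tokens": output_tokens,
    }


def tool_span_attributes(agent: str, tool: str) -> dict:
    """Attributes for one tool invocation by an agent (keys assumed, verify before use)."""
    return {
        "gen_ai.operation.name": "execute_tool",
        "gen_ai.agent.name": agent,
        "gen_ai.tool.name": tool,
    }


def out_of_scope(spans: Iterable[dict]) -> List[dict]:
    """Return tool-invocation spans whose tool is not authorized for that agent."""
    flagged = []
    for span in spans:
        if span.get("gen_ai.operation.name") != "execute_tool":
            continue  # only tool invocations are compared to authorized scope
        allowed = AUTHORIZED_TOOLS.get(span.get("gen_ai.agent.name"), set())
        if span.get("gen_ai.tool.name") not in allowed:
            flagged.append(span)
    return flagged
```

An agent with no entry in `AUTHORIZED_TOOLS` has an empty scope in this sketch, so every tool call it makes is flagged. That default-deny choice is an assumption of the example, not something the conventions prescribe.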

Why This Matters for the Next 18 Months

The regulatory trajectory is clear. Under the Digital Omnibus on AI, EU AI Act high-risk obligations now apply from 2 December 2027 for Annex III use cases (Article 6(2)) and from 2 August 2028 for Annex I product-route systems (Article 6(1)): more calendar time, but more documentation to produce. The Commission's 19 May 2026 draft Article 6 classification guidelines set the working interpretation: the four Article 6(3) exceptions are read narrowly, multi-agent systems are classified as a single deployed configuration, and "intended purpose" is decided by instructions, technical documentation, and marketing materials together, not by terms-of-service disclaimers. NIS2 in-scope operators must demonstrate control over cyber risk, and AI agents with access to network and information systems are squarely inside that scope. An organization with runtime AI governance has an audit-ready answer to every supervisory question. An organization with principles has a PDF. For the full read of the draft, see "The EU's high-risk AI filter: inside the May 2026 draft guidelines."

The Runtime Governance Reference Architecture includes the 6-week deployment template for the lightweight control plane, the policy engine rule set, and the monitoring telemetry specification.

Frequently Asked Questions

Why do AI governance policies fail at execution time?

A policy PDF is a statement of intent. It can say 'employees must not submit confidential data to unapproved AI tools,' and every word is correct. What it cannot do is prevent the submission. At the moment an employee is about to paste a contract into ChatGPT, the PDF is not in the loop. The only control that matters is one that operates at that moment: a DLP rule, a browser policy, a network block.

What are the four capabilities of runtime AI governance?

Guardrails at the point of use (input/output inspection for PII, secrets, regulated data), routing (directing AI requests to appropriate models based on data sensitivity), kill switches (defined mechanisms to disable AI capabilities within seconds), and monitoring (continuous inspection of AI behavior against expected patterns with AI-specific telemetry).

Can mid-market organizations afford runtime AI governance?

A lightweight control plane achieves 70 to 80% of the outcome at a fraction of enterprise-scale investment. The components are an API gateway for AI traffic, a policy engine, a logging layer, and an alerting layer. A functional version can be in production in 6 to 12 weeks with a small team.

What is the phasing for moving from experimental AI to governed AI?

Months 0 to 2: inventory every AI tool, agent, and API key. Months 2 to 4: finalize tiered data policy, publish sanctioned tool list, identify enforcement mechanisms. Months 4 to 8: deploy gateway, implement guardrails, define routing rules, install kill switches. Months 8 to 12: monitoring live, quarterly review cadence established, metrics reported to board.

What regulatory deadlines make runtime AI governance urgent?

Under the Digital Omnibus on AI, EU AI Act high-risk obligations now apply from 2 December 2027 for Annex III use cases (Article 6(2)) and from 2 August 2028 for Annex I product-route systems (Article 6(1)). The European Commission's 19 May 2026 draft Article 6 guidelines set the interpretation supervisors will use, reading the four Article 6(3) exceptions narrowly and assessing multi-agent systems as a single deployed configuration. NIS2 in-scope operators must demonstrate control over cyber risk, and AI agents with access to network and information systems fall squarely inside that scope. An organization with runtime governance has an audit-ready answer. An organization with principles has a PDF.

Sources

- IBM - Cost of a Data Breach Report 2025

- Saviynt - 2026 CISO AI Risk Report

- NHI Management Group - AI Agent Identity Security 2026 Deployment Guide

- Gartner - Top 10 Strategic Predictions 2026. 2025-10-21.

- European Commission - EU AI Act Implementation Timeline

- OpenTelemetry - AI Agent Observability with GenAI Semantic Conventions. Stable, early 2026.

- European Commission - Draft Commission guidelines on the classification of high-risk AI systems. 19 May 2026.