Shadow AI Is Already Inside Your Organization. Here Is How To Find It.

Approximately 78% of AI users now bring their own AI tools into the workplace, while only 36% of organizations have formal AI governance policies in place. The gap between adoption and governance is not a future risk. It is a current exposure that most security teams cannot see.

Key Takeaways

- 78% of AI users bring their own tools to work; 45% do not disclose usage to their employer

- Organizations with high levels of shadow AI face breach costs $670,000 higher per incident

- Only 35% of organizations report full visibility into where unstructured data resides

- Companies that provide approved AI alternatives see unauthorized usage drop 89%

Shadow IT Was About Software. Shadow AI Is About Data.

The original shadow IT problem was relatively contained. An employee installed Dropbox or used a personal Trello board. The risk was primarily about unsanctioned software and ungoverned file sharing. Security teams could discover it through network monitoring and endpoint management.

Shadow AI is structurally different. When an employee pastes a client's financial model into ChatGPT to reformat a table, or uploads an internal strategy document to Claude to generate a summary, the data leaves the organization's control perimeter entirely. Unlike shadow IT, which moved files between storage locations, shadow AI moves context, logic, and proprietary information into third-party systems that the organization cannot audit, cannot retrieve from, and in many cases cannot even detect.

The following table illustrates the structural differences:

| Dimension | Shadow IT | Shadow AI |
|---|---|---|
| What moves | Files between storage locations | Context, logic, and proprietary information |
| Detection method | Network monitoring, endpoint management | SSL inspection, OAuth audit, expense analysis |
| Data residency | Known (cloud storage providers) | Unknown (AI provider training pipelines) |
| Retrieval | Possible (files can be deleted remotely) | Impossible (data may be retained in model weights) |
| Access pattern | 47% via personal accounts | 47% via personal accounts, plus embedded AI in sanctioned SaaS |

The challenge is compounded by how these tools are accessed. According to Netskope, 47% of generative AI users access tools through personal accounts, completely bypassing enterprise identity and access controls. Standard firewall rules and network monitoring cannot inspect the content of HTTPS interactions without SSL inspection, a control many organizations have not deployed for AI traffic.
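Even where SSL inspection is not deployed, proxy, DNS, or firewall logs typically still record the destination hostname, which is enough to see who is reaching AI services, though not what they send. The following is a minimal sketch of that check, not a definitive implementation. The domain list is illustrative and incomplete, and the log format (`<timestamp> <user> <host>`) and the function names are assumptions made for this example.

```python
from collections import Counter

# Illustrative, incomplete list of generative AI service domains (assumption).
AI_DOMAINS = {
    "openai.com",
    "chatgpt.com",
    "anthropic.com",
    "claude.ai",
    "perplexity.ai",
    "midjourney.com",
}


def is_ai_host(host: str) -> bool:
    """True if host equals a listed domain or is a subdomain of one."""
    host = host.lower().rstrip(".")
    return any(host == d or host.endswith("." + d) for d in AI_DOMAINS)


def ai_hits_by_user(log_lines):
    """Count connections to AI services per user.

    Each line is assumed to look like: '<timestamp> <user> <host>'.
    """
    hits = Counter()
    for line in log_lines:
        parts = line.split()
        if len(parts) != 3:
            continue  # skip malformed lines
        _, user, host = parts
        if is_ai_host(host):
            hits[user] += 1
    return hits


# Hypothetical sample data for illustration only.
sample_log = [
    "2026-01-05T09:14:02Z alice chatgpt.com",
    "2026-01-05T09:15:40Z bob intranet.example.com",
    "2026-01-05T09:16:11Z alice api.anthropic.com",
    "2026-01-05T09:17:30Z carol notopenai.com",
]

for user, count in ai_hits_by_user(sample_log).items():
    print(f"{user}: {count} connection(s) to AI services")
```

Matching on the full domain or a dot-prefixed suffix avoids flagging look-alike hosts such as `notopenai.com`. Hostname counts of this kind show that a tool is in use, but only SSL inspection or DLP can show what data was sent.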

Why Existing Policies Fail

Most organizations that have AI policies wrote them for the previous generation of the problem. They address whether employees may use AI tools. They do not address what data flows into those tools, which tools are embedded in existing SaaS applications, or how to govern AI features that vendors are quietly enabling inside products the organization already uses.

By 2026, an estimated 70% of employee interactions with AI will occur through features embedded in sanctioned SaaS applications, making it significantly harder for IT to distinguish approved from unapproved usage. The AI is no longer a separate application an employee downloads. It is inside the tools they already have permission to use. Shadow AI discovery is therefore a prerequisite for connecting AI data governance to the frameworks an organization already has.

This means discovery requires more than network monitoring. It requires understanding what data is being processed by AI features within approved platforms, not just tracking standalone AI tool usage.

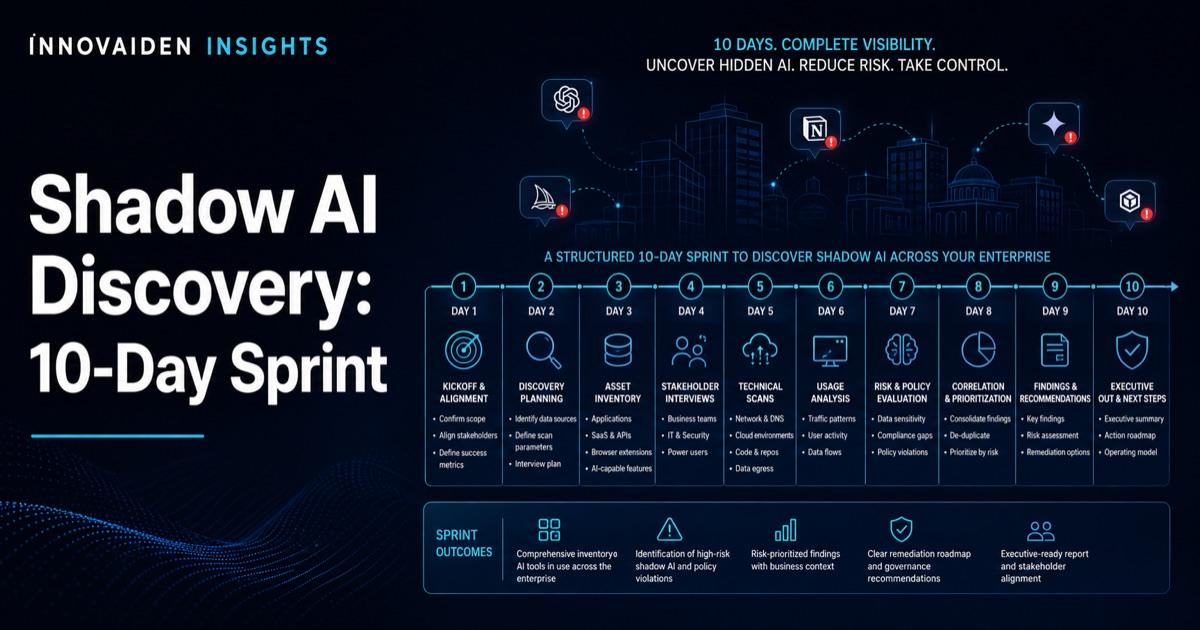

The 10-Day Discovery Sprint

A practical starting point for any organization is a focused discovery sprint. This is not a full governance program. It is a visibility exercise designed to answer one question: what AI tools are touching our data right now?

| Sprint Phase | Days | Focus | Activities |
|---|---|---|---|
| Expense and procurement review | 1 to 3 | Financial records | Pull expense reports and corporate card transactions for past 6 months. Search for subscriptions to OpenAI, Anthropic, Midjourney, Jasper, Copy.ai, Perplexity, and similar. Check procurement records for purchases outside IT. |
| Identity and access audit | 4 to 6 | IAM and OAuth | Review OAuth grants in identity provider. Audit API keys issued in past 12 months. Review browser extension inventories across managed endpoints. |
| Network and data flow analysis | 7 to 9 | Traffic and DLP | Analyze outbound traffic logs for known AI service domains. Review SSL inspection content patterns for bulk uploads. Review DLP alerts for AI-related data transfers. |
| Classification and decision | 10 | Triage | Categorize every discovered tool: endorse (approve with controls), restrict (allow with data handling rules), or remove (high-risk/non-compliant). Map each tool to data types accessed. |
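The expense review in days 1 to 3 can be partly automated. The sketch below scans an exported expense file for the vendor names listed in the table. It is an illustration under stated assumptions rather than a finished tool: the CSV column names (`employee`, `merchant`, `amount`), the sample rows, and the function name are hypothetical, and real card statements often abbreviate merchant names in ways this simple match will miss.

```python
import csv
import io
import re

# Vendor names taken from the sprint table; extend as needed.
AI_VENDORS = ["OpenAI", "Anthropic", "Midjourney", "Jasper", "Copy.ai", "Perplexity"]

# One case-insensitive pattern; re.escape keeps the dot in "Copy.ai" literal.
VENDOR_RE = re.compile("|".join(re.escape(v) for v in AI_VENDORS), re.IGNORECASE)


def find_ai_expenses(csv_text):
    """Return (employee, merchant, amount) for rows whose merchant matches a vendor.

    Assumes hypothetical columns: employee, merchant, amount.
    """
    matches = []
    for row in csv.DictReader(io.StringIO(csv_text)):
        if VENDOR_RE.search(row["merchant"]):
            matches.append((row["employee"], row["merchant"], float(row["amount"])))
    return matches


# Hypothetical sample export for illustration only.
sample_csv = """employee,merchant,amount
alice,OPENAI *CHATGPT SUBSCR,20.00
bob,Office Supplies Inc,54.10
carol,Midjourney Inc.,30.00
"""

for employee, merchant, amount in find_ai_expenses(sample_csv):
    print(f"{employee}: {merchant} ({amount:.2f})")
```

A plain substring match favors recall over precision. A name like "Jasper" can match unrelated merchants, so the results are a list of candidates to review by hand, not a confirmed inventory.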

Banning Does Not Work. Governing Does.

Research consistently shows that blanket AI bans drive usage underground. Nearly half of employees report they would continue using personal AI accounts even after an organizational ban. The more effective approach is to provide sanctioned alternatives that match or exceed the functionality of what employees are using on their own.

89% reduction in unauthorized AI usage with approved alternatives (Healthcare Brew / Vectra AI, 2026)

Organizations that deployed enterprise-grade AI tools with proper data controls saw unauthorized usage drop dramatically. The investment in approved tooling is not just a productivity decision. It is a security control. For organizations evaluating how to deploy AI agents with appropriate security controls, the governance layer starts with knowing what is already in use.

The Shadow AI Discovery Playbook covers the complete discovery sprint methodology, a tool classification framework, policy templates for each tier, and a data flow risk assessment methodology.

Run a Shadow AI Discovery Sprint

Innovaiden works with leadership teams deploying AI agents across their organizations, from initial setup and training to security framework alignment and governance readiness. Reach out to discuss how we can help your team.

Frequently Asked Questions

What is shadow AI and how is it different from shadow IT?

Shadow IT involved employees installing unsanctioned software like Dropbox or personal Trello boards. Shadow AI is structurally different: when an employee pastes client data into ChatGPT or uploads internal documents to Claude, the data leaves the organization's control perimeter entirely. Unlike shadow IT, which moved files between storage locations, shadow AI moves context, logic, and proprietary information into third-party systems the organization cannot audit or retrieve from.

How widespread is unauthorized AI use in the workplace?

Approximately 78% of AI users now bring their own AI tools into the workplace, and 45% do not disclose usage to their employer. Meanwhile, only 36% of organizations have formal AI governance policies in place. The gap between adoption and governance creates a current exposure that most security teams cannot see.

What does a 10-day shadow AI discovery sprint involve?

Days 1 through 3 cover expense and procurement review for AI subscriptions. Days 4 through 6 focus on identity and access audit, reviewing OAuth grants and API keys. Days 7 through 9 analyze network traffic and DLP alerts for AI-related data transfers. Day 10 classifies every discovered tool into three tiers: endorse, restrict, or remove.

Why do blanket AI bans fail as a governance strategy?

Research consistently shows that blanket AI bans drive usage underground. Nearly half of employees report they would continue using personal AI accounts even after an organizational ban. Organizations that deployed enterprise-grade AI tools with proper data controls saw unauthorized usage drop 89%. The investment in approved tooling functions as a security control, not just a productivity decision.

How much more do data breaches cost when shadow AI is involved?

Organizations with high levels of shadow AI face breach costs $670,000 higher per incident compared to organizations with governed AI usage. This premium reflects the expanded attack surface, the difficulty of incident investigation when data flows are unknown, and the regulatory penalties for uncontrolled data processing.

Sources

- SQ Magazine - Shadow AI Usage Statistics 2026

- IBM - 2025 Cost of Data Breach Report

- Cloud Security Alliance - 82% of Enterprises Have Unknown AI Agents Survey. 2026-04-21.

- Vectra AI - Shadow AI Risk Analysis

- Gartner - Embedded AI Adoption Forecasts, via JumpCloud

- Netskope - Generative AI Usage Patterns Report